AI / ML

GPU infrastructure for the full AI lifecycle — from development environments and distributed training to fine-tuning and production inference — without the waste of dedicated clusters sitting idle between runs.

AI/ML infrastructure outcomes

Four researchers per GPU node: time slicing lets multiple training jobs, notebooks, and inference endpoints share expensive accelerators

Sixty seconds from request to running GPU environment, not days of waiting on IT tickets, Jira queues, and manual provisioning cycles

Zero data leaves your environment: customer-hosted deployment for air-gapped, classified, and regulated AI workloads

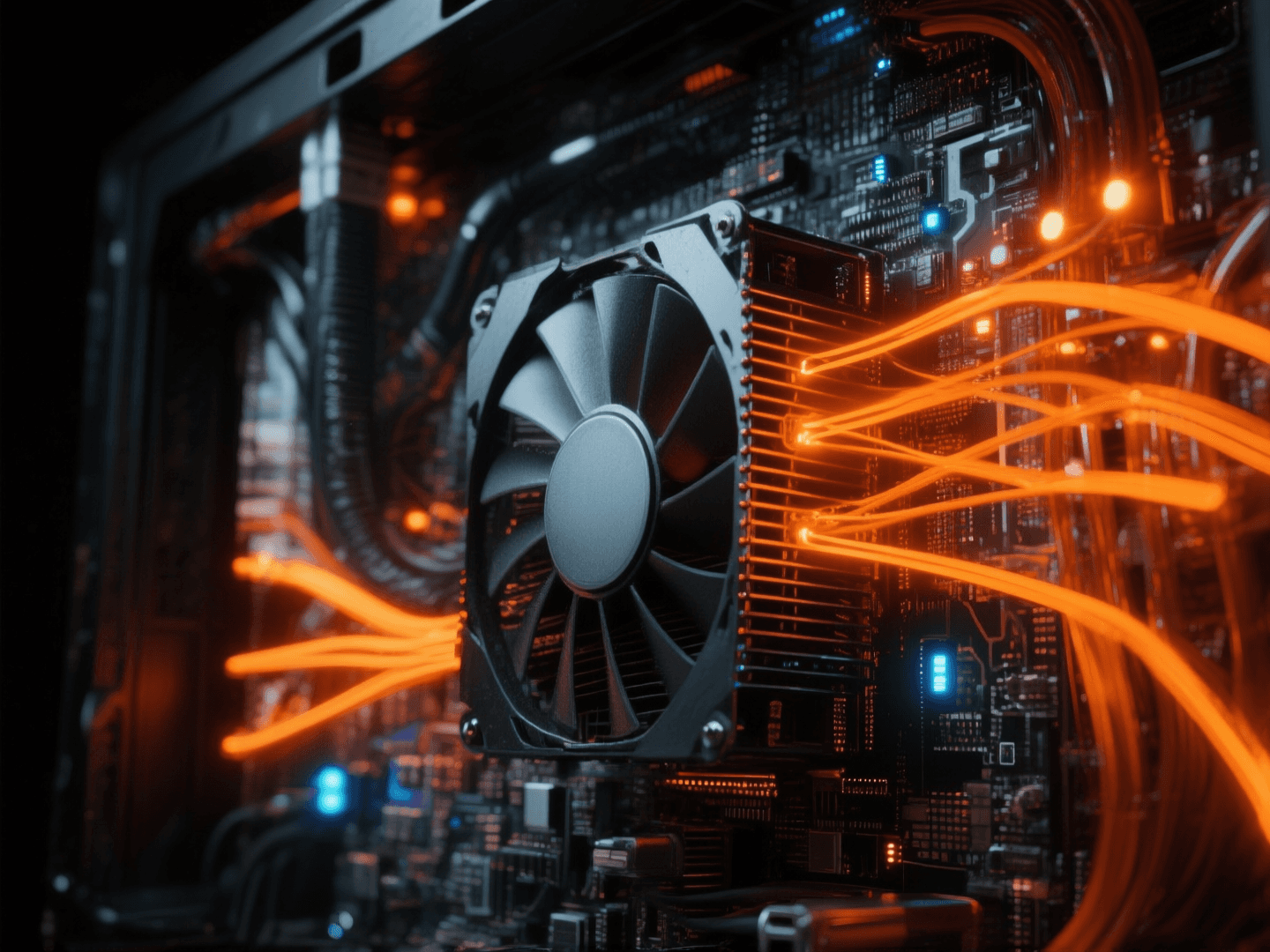

Built for GPU-intensive AI

Infrastructure that understands AI workloads

🔬

GPU time slicing for development

A single H100 can serve four concurrent Jupyter notebooks, each with guaranteed GPU access. Researchers iterate on models without waiting in a queue, and your $40K accelerators stop sitting at 15% utilization between training runs.
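To make the arithmetic behind that claim concrete, here is a minimal sketch. It assumes each notebook on its own keeps the GPU about 15% busy (the figure above) and that demands from time-sliced sessions simply add up, capped at 100%. The function name and the additive model are illustrative assumptions, not a description of Orion's scheduler.

```python
def shared_utilization(per_session_util: float, sessions: int) -> float:
    """Estimate aggregate utilization when sessions time-slice one GPU.

    Assumption: per-session demands add linearly and cap at 100%.
    Real workloads contend for memory and compute, so this is a rough
    estimate rather than a measurement.
    """
    if not 0.0 <= per_session_util <= 1.0:
        raise ValueError("per_session_util must be between 0 and 1")
    if sessions < 0:
        raise ValueError("sessions must be non-negative")
    return min(1.0, per_session_util * sessions)


if __name__ == "__main__":
    # One notebook alone versus four notebooks sharing the same GPU.
    print(f"1 session:  {shared_utilization(0.15, 1):.0%}")
    print(f"4 sessions: {shared_utilization(0.15, 4):.0%}")
```

Under these assumptions, four sessions take the same accelerator from roughly 15% to roughly 60% utilization.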

🔀

Distributed training orchestration

Multi-node PyTorch, JAX, and TensorFlow training jobs scheduled across your GPU fleet automatically. Orion handles node allocation and network topology awareness — your ML engineers focus on model architecture, not infrastructure YAML.

🔒

Air-gapped and secure deployment

Train and fine-tune models on sensitive data without it ever leaving your environment. Orion deploys fully on-premises with no cloud dependency — purpose-built for defense, intelligence, and regulated industries where data sovereignty is non-negotiable.

🚀

Inference and model serving

Deploy inference endpoints alongside training workloads on the same infrastructure. Orion dynamically allocates GPU fractions based on demand — scale serving capacity up during peak hours and reclaim resources for training overnight, automatically.
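The idea of shifting GPU fractions between serving and training can be sketched as a simple capacity split. This is an illustrative model only: the function name, the four-slices-per-GPU granularity, and the requests-per-second figures are assumptions chosen for the example, not Orion's API or its actual allocation policy.

```python
import math


def split_gpu_slices(total_gpus: int, serving_rps: float,
                     rps_per_slice: float, slices_per_gpu: int = 4):
    """Return (serving_slices, training_slices) for the current demand.

    Assumptions (hypothetical): each GPU is divided into `slices_per_gpu`
    equal fractions, and one fraction sustains `rps_per_slice` requests
    per second of inference traffic.
    """
    if total_gpus < 0 or slices_per_gpu <= 0:
        raise ValueError("invalid GPU or slice count")
    if serving_rps < 0 or rps_per_slice <= 0:
        raise ValueError("invalid request-rate parameters")
    total_slices = total_gpus * slices_per_gpu
    # Round serving demand up to whole slices, never exceeding the fleet.
    needed = math.ceil(serving_rps / rps_per_slice)
    serving = min(total_slices, needed)
    # Whatever serving does not need is available to training jobs.
    return serving, total_slices - serving


if __name__ == "__main__":
    # Eight GPUs, four slices each, 50 requests/s per slice (all assumed).
    print("peak hours:", split_gpu_slices(8, serving_rps=900, rps_per_slice=50))
    print("overnight: ", split_gpu_slices(8, serving_rps=100, rps_per_slice=50))
```

With these example numbers, serving holds 18 of 32 slices at peak and drops to 2 overnight, leaving 30 slices for training.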

Common AI workloads

LLM fine-tuning

LoRA, QLoRA, and full fine-tuning of foundation models on your own data — with GPU time slicing for concurrent experiments

Computer vision

Object detection, segmentation, and image generation models — multi-GPU training with automatic compute orchestration across your fleet

RAG pipelines

Embedding generation, vector indexing, and retrieval-augmented inference — co-located on the same GPU infrastructure as your models

Reinforcement learning

RLHF and reward model training with dynamic GPU scaling — burst to multi-node when experiments demand it, scale back when idle

Classified AI processing

Air-gapped LLM deployment for defense and intelligence — on-prem infrastructure with zero external network dependency and full data sovereignty

Model eval & benchmarking

Spin up ephemeral GPU environments for model comparison and evaluation — tear down automatically when benchmarks complete

Your next AI breakthrough starts here.

Four researchers per GPU node. Sixty seconds from request to running environment. No IT tickets, no queue. Talk to our team about putting that to work for your ML team.

Production-ready in days, not months

Most teams ship their first production workload on Orion within a week.

Scales from studio to enterprise

A single VFX studio or a national research network — no architectural rework required.